Researcher hacks self-driving car sensors

The multi-thousand-dollar laser ranging (lidar) systems that most self-driving cars rely on to sense obstacles can be hacked by a setup costing just $60, according to a security researcher.

“I can take echoes of a fake car and put them at any location I want,” says Jonathan Petit, Principal Scientist at Security Innovation, a software security company. “And I can do the same with a pedestrian or a wall.”

Using such a system, attackers could trick a self-driving car into thinking something is directly ahead of it, thus forcing it to slow down. Or they could overwhelm it with so many spurious signals that the car would not move at all for fear of hitting phantom obstacles.

In a paper written while he was a research fellow in the University of Cork’s Computer Security Group and due to be presented at the Black Hat Europe security conference in November, Petit describes a simple setup he designed using a low-power laser and a pulse generator. “It’s kind of a laser pointer, really. And you don’t need the pulse generator when you do the attack,” he says. “You can easily do it with a Raspberry Pi or an Arduino. It’s really off the shelf.”

Petit set out to explore the vulnerabilities of autonomous vehicles, and quickly settled on sensors as the most susceptible technologies. “This is a key point, where the input starts,” he says. “If a self-driving car has poor inputs, it will make poor driving decisions.” Other researchers had previously hacked or spoofed vehicles’ GPS devices and wireless tire sensors.

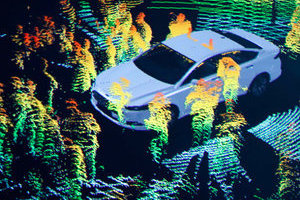

While the short-range radars used by many self-driving cars for navigation operate in a frequency band requiring licensing, lidar systems use easily mimicked pulses of laser light to build up a 3-D picture of the car’s surroundings and were ripe for attack. Security flaws more broadly affect more than 100 car models.

Petit began by simply recording pulses from a commercial IBEO Lux lidar unit. The pulses were not encoded or encrypted, which allowed him to simply replay them at a later point. “The only tricky part was to be synchronized, to fire the signal back at the lidar at the right time,” he says. “Then the lidar thought that there was clearly an object there.”

Petit was able to create the illusion of a fake car, wall, or pedestrian anywhere from 20 to 350 meters from the lidar unit, make multiple copies of the simulated obstacles, and even make them move. “I can spoof thousands of objects and basically carry out a denial of service attack on the tracking system so it’s not able to track real objects,” he says. Petit’s attack worked at distances up to 100 meters, in front, to the side, or even behind the lidar being attacked, and did not require him to target the lidar precisely with a narrow beam.
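The synchronization Petit describes comes down to time-of-flight arithmetic. A lidar infers distance from how long a pulse takes to travel out and back, so the apparent position of an echo depends only on when it arrives. The sketch below is illustrative physics rather than anything taken from Petit's paper, and the function names are hypothetical. It shows that apparent distances of 20 to 350 meters correspond to round-trip delays of roughly 0.13 to 2.3 microseconds.

```python
# Illustrative time-of-flight arithmetic. The function names are
# hypothetical and are not taken from Petit's paper or any lidar API.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def round_trip_delay_s(distance_m: float) -> float:
    """Time for a light pulse to reach an object at distance_m and return."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


def apparent_distance_m(delay_s: float) -> float:
    """Distance a lidar would infer from an echo arriving after delay_s."""
    return SPEED_OF_LIGHT_M_PER_S * delay_s / 2.0


if __name__ == "__main__":
    # The two ends of the range quoted in the article.
    for distance in (20.0, 350.0):
        delay_ns = round_trip_delay_s(distance) * 1e9
        print(f"{distance:5.0f} m -> {delay_ns:7.1f} ns round trip")
```

Delays this short are why timing, rather than laser power or aiming, is the part Petit calls tricky.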

Petit acknowledges that his attacks are currently limited to one specific unit but says, “The point of my work is not to say that IBEO has a poor product. I don’t think any of the lidar manufacturers have thought about this or tried this.” Sensor attacks are not limited to just robotic drivers, of course. The same laser pointer that Petit used could carry out an equally devastating denial of service attack on a human motorist by simply dazzling her, and without the need for sophisticated laser pulse recording, generation, or synchronization equipment.

But the fact that a lidar attack could be carried out without alerting a self-driving car’s passengers is worrying. Karl Iagnemma directs the Robotic Mobility Group at MIT and is CEO of nuTonomy, a start-up focused on the development of software for self-driving cars. He says: “Everyone knows security is an issue and will at some point become an important issue. But the biggest threat to an occupant of a self-driving car today isn’t any hack, it’s the bug in someone’s software because we don’t have systems that we’re 100-percent sure are safe.”

Petit argues that it is never too early to start thinking about security. “There are ways to solve it,” he says. “A strong system that does misbehavior detection could cross-check with other data and filter out those that aren’t plausible. But I don’t think carmakers have done it yet. This might be a good wake-up call for them.”
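One way to picture the cross-checking Petit has in mind is a plausibility filter that keeps a lidar detection only if a second sensor reports something nearby. The sketch below is an assumption-laden illustration, not a description of any carmaker's system. The `Detection` type, the choice of a second sensor such as radar, and the 2-meter threshold are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical object position in the car's frame, in meters."""
    x_m: float
    y_m: float


def filter_plausible(lidar, other_sensor, max_gap_m=2.0):
    """Keep lidar detections corroborated by another sensor.

    A lidar detection counts as plausible if any detection from the
    other sensor lies within max_gap_m of it (an assumed threshold).
    """
    def corroborated(detection):
        return any(
            math.hypot(detection.x_m - o.x_m, detection.y_m - o.y_m) <= max_gap_m
            for o in other_sensor
        )

    return [d for d in lidar if corroborated(d)]


if __name__ == "__main__":
    lidar = [Detection(30.0, 0.0), Detection(50.0, 1.5)]  # second one is a phantom
    radar = [Detection(30.5, 0.2)]
    print(filter_plausible(lidar, radar))
```

Discarding everything a second sensor cannot confirm would also throw away real objects that only the lidar can see, so a production system would more likely lower its confidence in uncorroborated detections than drop them outright.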
